The 12 Coding Patterns That Cover Most of 2026 FAANG Questions

A complete reference: 12 algorithmic patterns, the signals that tell you which one fits, and where each shows up in 2026 loops.

Coding interviews look chaotic from the outside.

A candidate could be asked about strings, arrays, trees, graphs, dynamic programming, system constraints, or something the interviewer invented yesterday.

The space of possible questions feels infinite.

Most candidates respond to this perceived chaos by drilling LeetCode problems by topic: 50 array problems, 50 tree problems, 50 dynamic programming problems. They build problem-coverage by volume.

This approach is inefficient and often ineffective.

The actual coverage of FAANG coding interviews is not infinite.

An analysis of widely reported FAANG questions across Meta, Google, Amazon, Apple, and Netflix from 2024 through 2026, combined with a structural analysis of LeetCode’s most-frequented Top 200 list and candidate-reported feedback, suggests that the same small set of algorithmic patterns recurs in approximately 80 percent of interview problems.

The remaining 20 percent are either unusual pattern combinations or genuinely novel problems, and the genuinely novel ones are rare.

Understanding the 12 patterns below changes how you prepare.

Instead of memorizing 200 problems, you learn 12 techniques deeply.

When a new problem appears in an interview, you do not have to remember whether you solved it before.

You ask yourself: which pattern does this fit?

Then you apply the pattern.

This post is the reference. For each pattern, you get:

The pattern itself, named and defined

The signal in the problem statement that tells you to reach for it

One canonical example with the core technique

Where it shows up most often in FAANG loops in 2026

How to Read This Reference

The patterns are ordered by approximate frequency in FAANG coding rounds, with the most common first.

If you have limited prep time and have to choose, work the top 5 in order. They alone cover roughly 50 to 60 percent of questions.

For each pattern, the most important section is “the signal.”

This is the specific phrase or constraint in a problem statement that tells you the pattern applies.

Strong candidates recognize patterns from problem signals within the first minute, which is what allows them to start solving without flailing.

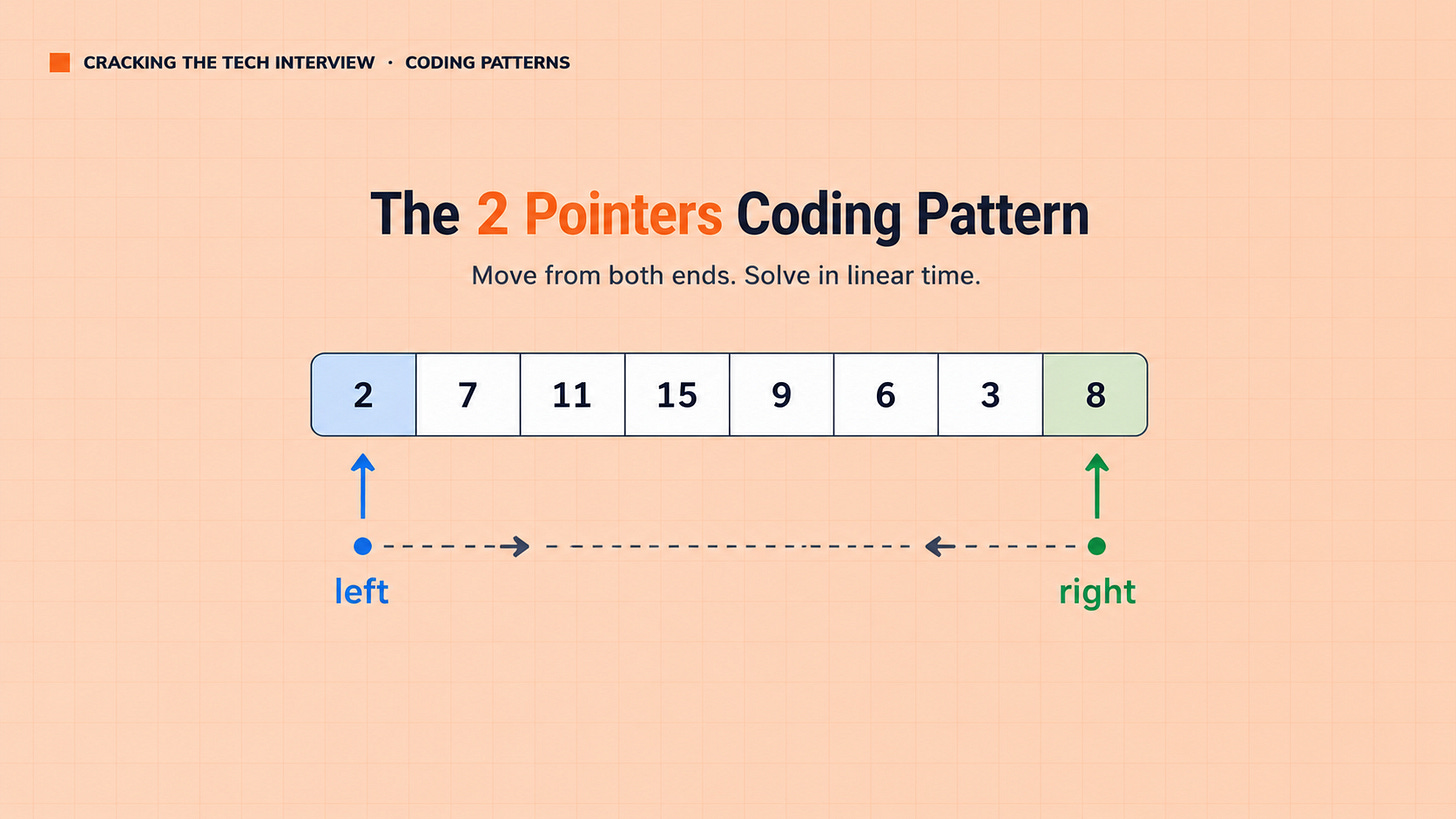

Pattern 1: Two Pointers

What it is: Use two indices that move through an array or string, typically toward each other or in the same direction, to solve problems in O(n) time that would otherwise require O(n²) nested loops.

The signal: The problem mentions a sorted array, asks for pairs or triplets that satisfy some condition, or asks you to compare two ends of a sequence. Words to watch for: “sorted,” “pair,” “find two,” “palindrome,” “container.”

Canonical example: Given a sorted array, find two numbers that sum to a target. Place one pointer at the start, one at the end. If the sum is too large, move the right pointer left. If too small, move the left pointer right. O(n) time, O(1) space.
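A minimal Python sketch of the walk described above. The function name `two_sum_sorted` and the choice to return a pair of indices (or `None` when no pair exists) are assumptions for illustration; an interviewer may ask for the values instead.

```python
def two_sum_sorted(nums, target):
    """Return indices (i, j), i < j, with nums[i] + nums[j] == target, else None.

    Assumes nums is sorted in non-decreasing order.
    """
    left, right = 0, len(nums) - 1
    while left < right:
        total = nums[left] + nums[right]
        if total == target:
            return left, right
        if total > target:
            right -= 1  # sum too large: move the right pointer left
        else:
            left += 1   # sum too small: move the left pointer right
    return None
```

Each step discards one index for good, so the loop runs at most n - 1 times and uses no extra storage.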

Where it shows up at FAANG in 2026: Meta uses two-pointer problems in roughly 30 to 40 percent of E4-E5 coding rounds. Google and Amazon use them slightly less but consistently. The technique is foundational and shows up in compound problems where it’s a sub-step.

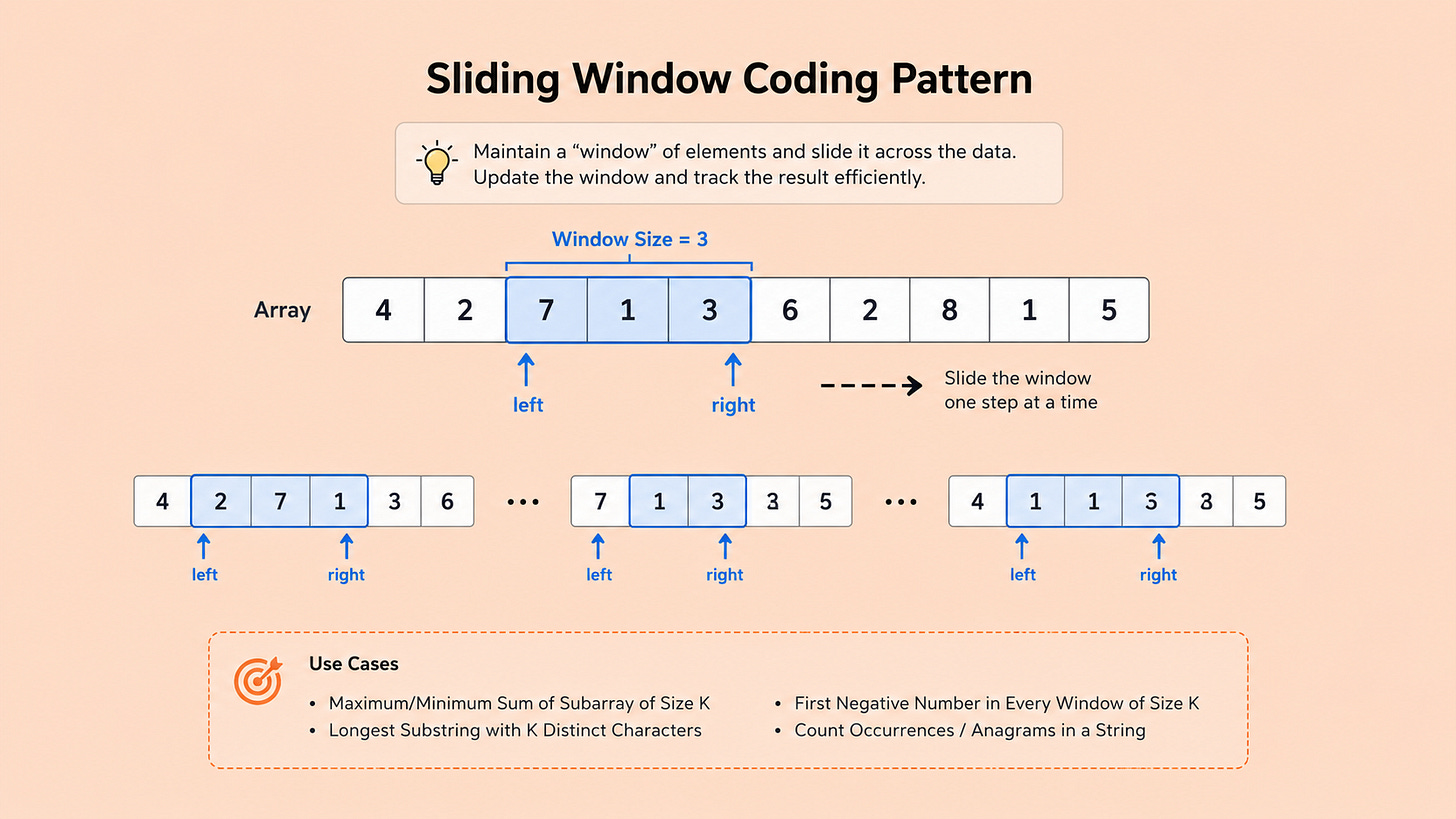

Pattern 2: Sliding Window

What it is: Maintain a “window” over a contiguous subarray or substring, expanding from one side and contracting from the other based on whether the window satisfies some condition. The classic application is finding the longest or shortest subarray that meets a constraint.

The signal: “Contiguous” subarray or substring. Words like “longest,” “shortest,” “smallest,” “largest” combined with “window,” “subarray,” or “substring.” Constraints like “sum at least K” or “no repeating characters” applied to a contiguous segment.

Canonical example: Find the longest substring with no repeating characters. Maintain a window with a hash set tracking characters in it. Expand from the right, adding characters. When you hit a duplicate, contract from the left until the duplicate is gone. Track the max window size.
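The expand-and-contract loop can be sketched in Python as follows. The name `longest_unique_substring` is hypothetical, and the sketch returns only the length of the best window, which is one common way the problem is posed.

```python
def longest_unique_substring(s):
    """Return the length of the longest substring of s with no repeated character."""
    seen = set()  # characters currently inside the window s[left:right + 1]
    left = 0
    best = 0
    for right, ch in enumerate(s):
        # Contract from the left until ch is no longer a duplicate.
        while ch in seen:
            seen.remove(s[left])
            left += 1
        seen.add(ch)
        best = max(best, right - left + 1)
    return best
```

Every character enters and leaves the set at most once, so the nested loop is still O(n) overall.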

Where it shows up at FAANG in 2026: Heavily used at Meta and Amazon. Google uses it less for traditional sliding window but more often for variable-window variants. Sliding window questions are a favorite of Meta interviewers because they separate candidates who recognize the pattern from those who reach for O(n²) brute force.

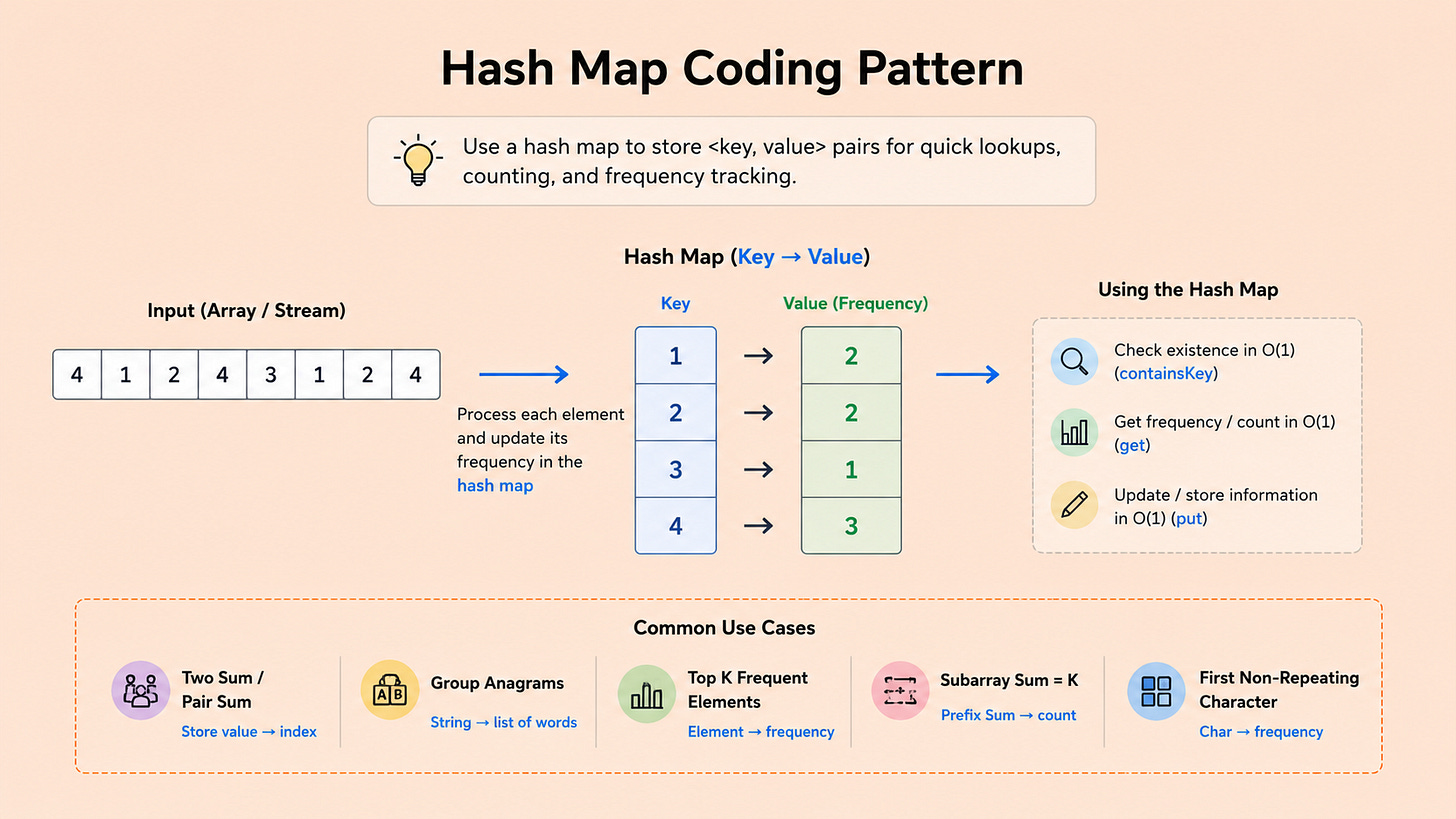

Pattern 3: Hash Map / Hash Set Lookup

What it is: Use a hash table to convert what would be an O(n²) search into O(n) by precomputing or caching seen values. The pattern applies whenever you need to know “have I seen X before” or “what’s the value associated with X.”

The signal: The problem requires checking membership, counting frequency, or finding the complement of a value. Words to watch for: “count,” “find,” “first occurrence,” “duplicate,” “unique,” “frequency.”

Canonical example: Given an array, find the first duplicate. Walk the array. For each element, check if it’s in a hash set. If yes, return it. If no, add it to the set. O(n) time, O(n) space.
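As a short Python sketch of that walk (the name `first_duplicate` and the `None` return for "no duplicate" are illustrative assumptions):

```python
def first_duplicate(nums):
    """Return the first value whose second occurrence is reached, or None."""
    seen = set()
    for value in nums:
        if value in seen:
            return value  # seen before: this is the first duplicate
        seen.add(value)
    return None
```

"First" here means the value whose repeat appears earliest in the array, which is the reading the walk above implies.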

Where it shows up at FAANG in 2026: This is the most common pattern by raw count, but it’s often a sub-step within a larger problem rather than the entire problem. Hash maps appear in roughly 50 to 60 percent of FAANG coding solutions. The standalone hash-map-only questions are usually warm-up rounds at smaller companies; FAANG tends to compose hash maps with other patterns.

Pattern 4: Binary Search

What it is: Repeatedly halve the search space by checking the middle element and discarding the half that cannot contain the answer. Most candidates know binary search on a sorted array. The harder variant, which FAANG interviewers favor, is binary search on the answer space itself.

The signal: Sorted input, or any monotonic property. Words to watch for: “sorted,” “find,” “minimum/maximum that satisfies,” “smallest value such that,” “Kth smallest.” Also any problem where you can binary search the answer (the answer space is bounded and a “feasibility” check exists for any candidate value).

Canonical example (basic): Find an element in a sorted array. Standard binary search. O(log n).

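Canonical example (advanced): Find the smallest divisor such that the sum of the array values, each divided by it and rounded up, is at most a threshold. Binary search the divisor space, which runs from 1 to the largest array value. For each candidate, check feasibility in O(n). Total O(n log range), where range is the size of the divisor space.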
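Here is a hedged Python sketch of binary search on the answer for the divisor problem. It assumes the usual formulation: positive integers, each quotient rounded up, and a threshold of at least `len(nums)` so that an answer exists. The names `smallest_divisor` and `feasible` are hypothetical.

```python
def smallest_divisor(nums, threshold):
    """Smallest d >= 1 with sum(ceil(x / d) for x in nums) <= threshold.

    Assumes nums holds positive integers and threshold >= len(nums).
    """
    def feasible(d):
        # (x + d - 1) // d is ceiling division without floating point.
        return sum((x + d - 1) // d for x in nums) <= threshold

    lo, hi = 1, max(nums)  # at d = max(nums) every quotient is 1
    while lo < hi:
        mid = (lo + hi) // 2
        if feasible(mid):
            hi = mid       # mid works; look for something smaller
        else:
            lo = mid + 1   # mid is too small
    return lo
```

The search works because feasibility is monotonic: if a divisor is feasible, every larger divisor is too.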

Where it shows up at FAANG in 2026: Binary search on the answer is increasingly common at Google and Meta L5+ coding rounds. The basic sorted-array variant shows up at all levels. A candidate who recognizes the harder variant when the problem statement does not explicitly mention sorting signals strong instincts.

Pattern 5: Tree Traversal (DFS and BFS)

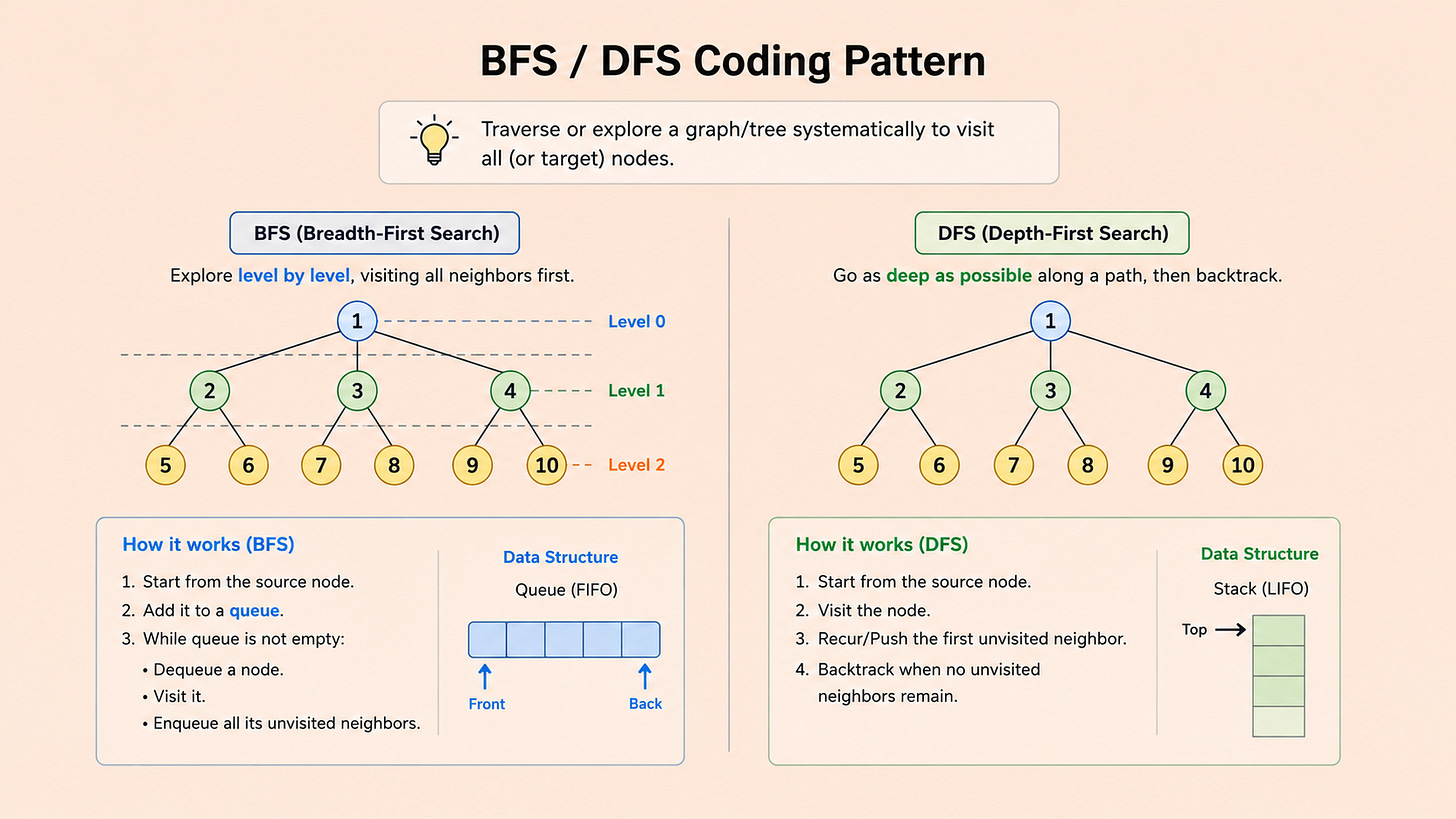

What it is: Walk through a tree or graph using either depth-first search (recursive or stack-based) or breadth-first search (queue-based). Each variant has standard applications: DFS for paths, structures, and recursion; BFS for shortest paths and level-order operations.

The signal: The problem involves a tree, a graph, or any hierarchical/connected structure. Words to watch for: “tree,” “graph,” “ancestors,” “descendants,” “connected,” “shortest path” (BFS), “all paths” (DFS), “level,” “deepest.”

Canonical example (DFS): Given a binary tree, find the maximum depth. Recursive DFS. For each node, return 1 + max(depth of left, depth of right).

Canonical example (BFS): Given a binary tree, return its level-order traversal. Queue-based BFS. For each level, dequeue all current nodes, record their values, enqueue their children.
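Both canonical examples fit in one short Python sketch. The `TreeNode` class is a hypothetical minimal node type; real interview scaffolding may name its fields differently.

```python
from collections import deque


class TreeNode:
    def __init__(self, val, left=None, right=None):
        self.val = val
        self.left = left
        self.right = right


def max_depth(node):
    """Recursive DFS: depth of an empty tree is 0."""
    if node is None:
        return 0
    return 1 + max(max_depth(node.left), max_depth(node.right))


def level_order(root):
    """Queue-based BFS: return node values grouped by level."""
    if root is None:
        return []
    levels = []
    queue = deque([root])
    while queue:
        level = []
        # len(queue) is fixed before the loop, so this drains exactly one level.
        for _ in range(len(queue)):
            node = queue.popleft()
            level.append(node.val)
            if node.left is not None:
                queue.append(node.left)
            if node.right is not None:
                queue.append(node.right)
        levels.append(level)
    return levels
```

For a tree with root 3, left child 9, and right child 20 (which has children 15 and 7), `max_depth` gives 3 and `level_order` gives `[[3], [9, 20], [15, 7]]`.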

Where it shows up at FAANG in 2026: Tree problems are ubiquitous. Roughly 25 to 35 percent of FAANG coding rounds include a tree traversal question. Amazon and Apple favor recursive DFS; Meta and Google use both DFS and BFS depending on the variant.

Pattern 6: Dynamic Programming

What it is: Break a problem into overlapping subproblems and store their solutions to avoid recomputation. Most DP problems can be expressed either top-down (recursion with memoization) or bottom-up (iterative table filling). The hard part is recognizing the recursive structure.

The signal: The problem asks for an optimal value (max, min, count of ways) where the answer to the full problem can be expressed in terms of answers to smaller versions of itself. Words to watch for: “minimum cost,” “maximum value,” “number of ways,” “longest,” “shortest,” “can you reach.”

Canonical example: Climbing stairs. You can take 1 or 2 steps at a time. How many distinct ways to reach step n? f(n) = f(n-1) + f(n-2), with f(0) = 1, f(1) = 1. Bottom-up: fill an array of size n+1.
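The bottom-up table fill looks roughly like this in Python (the function name `climb_stairs` is an assumption for illustration):

```python
def climb_stairs(n):
    """Number of distinct ways to reach step n taking 1 or 2 steps at a time."""
    if n < 0:
        raise ValueError("n must be non-negative")
    ways = [1] * (n + 1)  # ways[0] = ways[1] = 1 are the base cases
    for i in range(2, n + 1):
        ways[i] = ways[i - 1] + ways[i - 2]
    return ways[n]
```

Since each entry depends only on the previous two, the array can be replaced by two variables to bring the space down to O(1), a follow-up interviewers often ask for.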

Where it shows up at FAANG in 2026: Google uses DP heavily. Meta uses it less in 2026 than in 2022 (the AI-assisted coding round at Meta has shifted some DP problems to non-AI rounds, but they still appear). Amazon and Apple use DP variably depending on the team. DP is the highest-difficulty pattern and the one where the preparation gap is most visible.

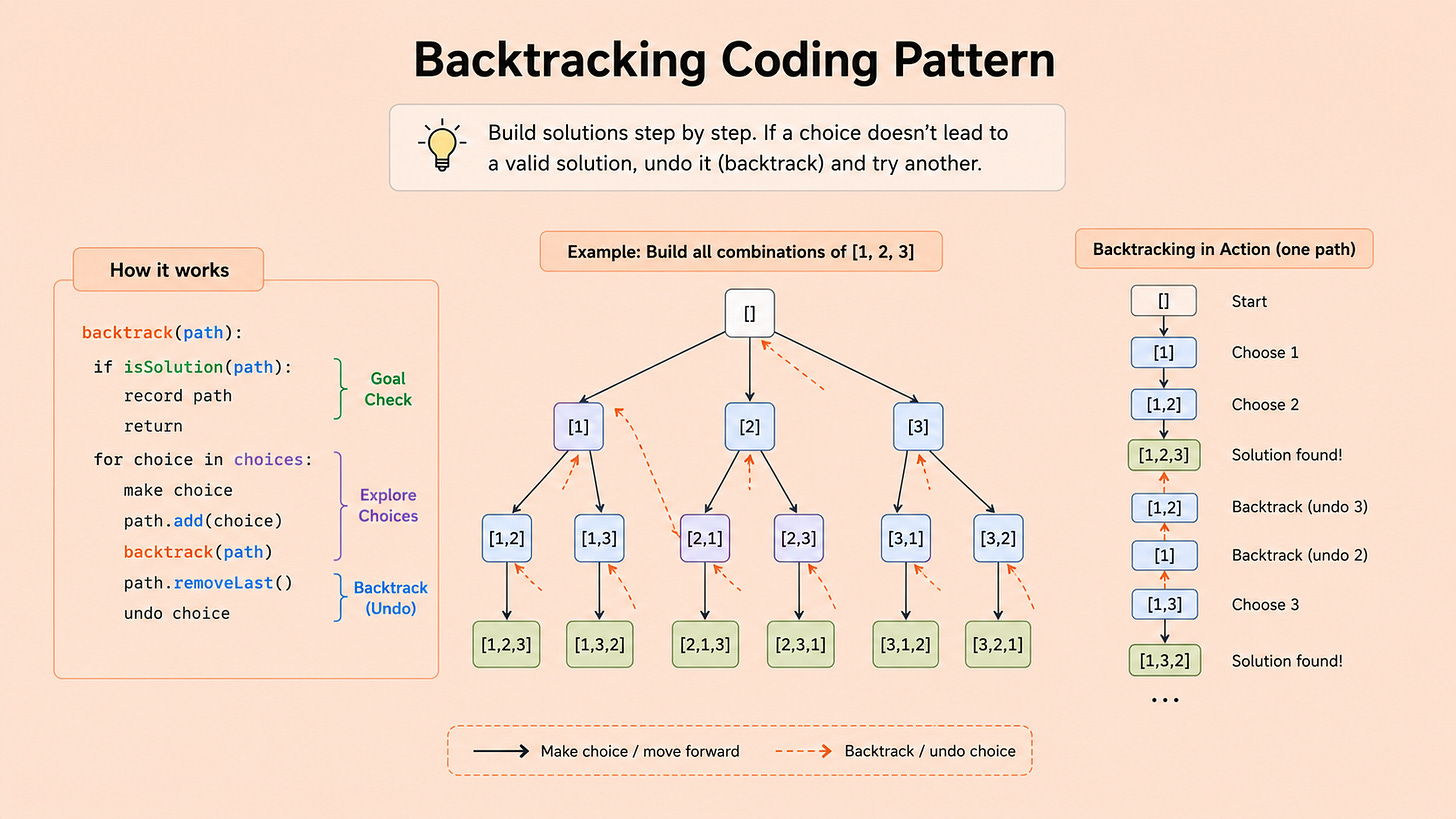

Pattern 7: Backtracking

What it is: Build candidate solutions incrementally and abandon (backtrack from) candidates that cannot lead to a valid solution. The technique is recursive: try a choice, recurse, undo the choice if it fails, try the next choice.

The signal: The problem asks for all possible solutions, all permutations, all combinations, or all valid configurations. Words to watch for: “all,” “permutations,” “combinations,” “subsets,” “generate all,” “N-queens,” “Sudoku.”

Canonical example: Generate all permutations of a list of distinct integers. For each position, try each remaining element. Recurse with the chosen element added. After recursion, remove it.
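One way to sketch the choose, recurse, undo loop in Python. The names `permutations`, `backtrack`, and the `used` flag array are illustrative choices, and the sketch assumes distinct elements, as the example states.

```python
def permutations(nums):
    """Return all permutations of a list of distinct values."""
    result = []
    current = []                 # the partial permutation being built
    used = [False] * len(nums)   # used[i] is True while nums[i] is in current

    def backtrack():
        if len(current) == len(nums):
            result.append(current[:])  # copy, because current keeps mutating
            return
        for i, value in enumerate(nums):
            if used[i]:
                continue
            used[i] = True         # choose
            current.append(value)
            backtrack()            # recurse
            current.pop()          # undo the choice
            used[i] = False

    backtrack()
    return result
```

Forgetting the copy on `result.append` is the classic bug here: every stored permutation would end up as the same empty list.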

Where it shows up at FAANG in 2026: Less frequent than the top 5, but a standard part of the question rotation. Particularly common at Meta and Google. Backtracking problems are time-pressure tests; candidates who code them slowly often fail to finish in 45 minutes, even if they recognize the pattern.

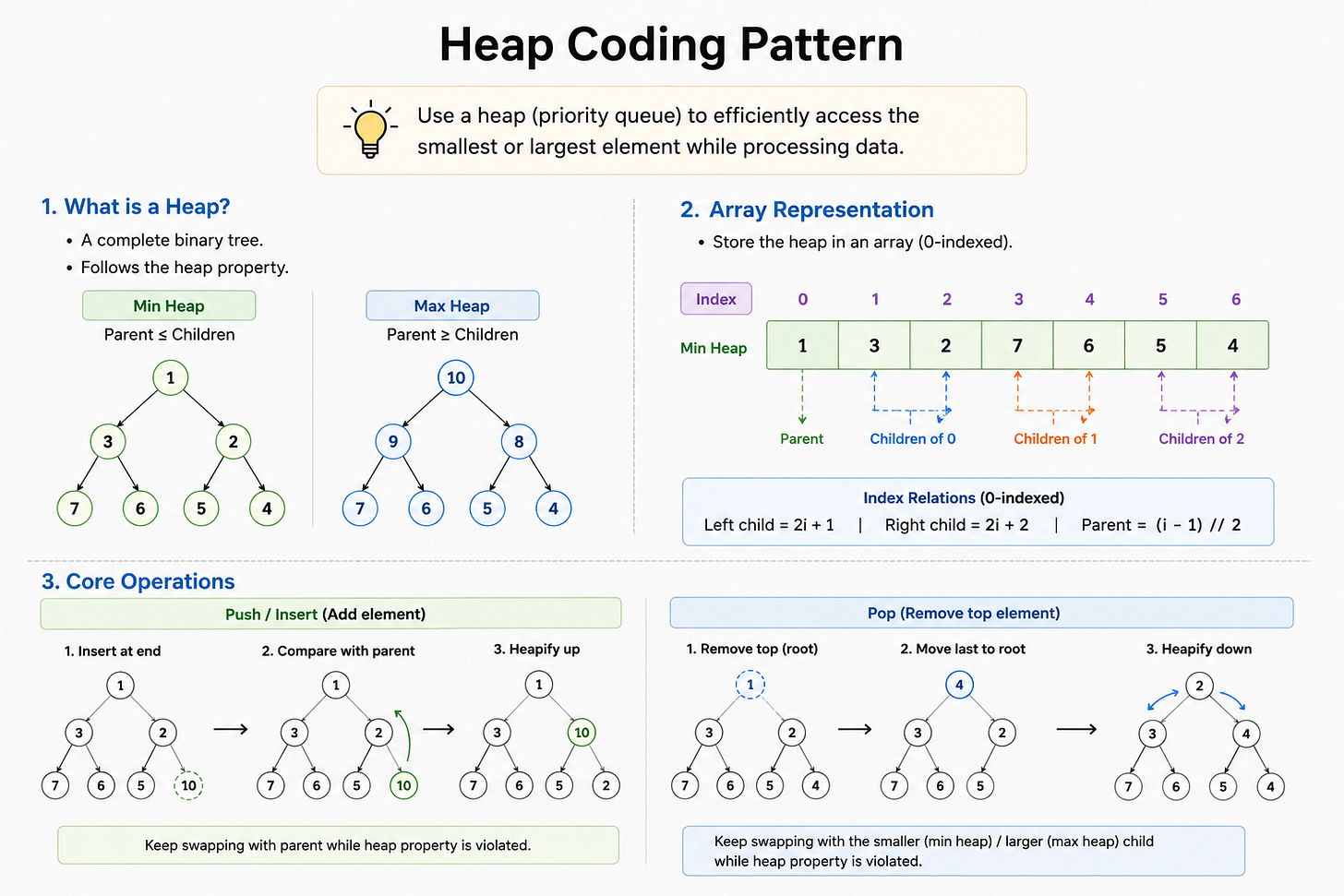

Pattern 8: Heaps / Priority Queues

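What it is: Use a heap data structure to efficiently track the smallest or largest K elements, or to repeatedly process the highest-priority item. Counterintuitively, a min-heap of size K tracks the K largest elements, because its root is the smallest of them and therefore the one to evict; a max-heap of size K tracks the K smallest for the same reason.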

The signal: “Top K,” “smallest K,” “Kth largest,” “median of a stream,” “merge sorted lists.” Anything where you need ongoing access to extreme values without sorting the full collection.

Canonical example: Find the Kth largest element in an array. Maintain a min-heap of size K. For each element, if the heap is smaller than K, push. Otherwise, if the element is larger than the heap’s minimum, pop and push. After the array is processed, the heap’s minimum is the Kth largest.
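A sketch of the size-K min-heap using Python's standard `heapq` module. The function name `kth_largest` is hypothetical, and the code assumes 1 <= k <= len(nums).

```python
import heapq


def kth_largest(nums, k):
    """Return the kth largest element. Assumes 1 <= k <= len(nums)."""
    heap = []  # min-heap holding the k largest values seen so far
    for value in nums:
        if len(heap) < k:
            heapq.heappush(heap, value)
        elif value > heap[0]:
            # Pop the current minimum and push the new value in one step.
            heapq.heapreplace(heap, value)
    return heap[0]  # smallest of the k largest is the kth largest
```

This runs in O(n log K) time and O(K) space, which is why it also works on a stream where the full input never fits in memory.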

Where it shows up at FAANG in 2026: Heaps appear in roughly 10 to 15 percent of FAANG coding rounds. Common at Amazon and Google. The “Kth largest in a stream” variant is asked frequently because it tests whether the candidate understands why a heap is the right data structure (not a sorted array, not a hash map).

Pattern 9: Graph Algorithms (Topological Sort, Union-Find, Shortest Path)

What it is: A family of algorithms for problems involving directed dependencies, connected components, or shortest paths in weighted graphs. The three main techniques are topological sort (for directed acyclic graphs), union-find (for connectivity), and shortest path algorithms (Dijkstra, Bellman-Ford).

The signal: The problem describes dependencies, prerequisites, courses to take, tasks to schedule, components of a connected structure, or distances in a weighted graph. Words to watch for: “prerequisite,” “schedule,” “depends on,” “connected components,” “shortest distance” with weights.

Canonical example: Course schedule problem. Given courses with prerequisites, return whether you can finish all courses. Build a directed graph, check for cycles using DFS or Kahn’s algorithm. If no cycle, all courses can be finished.
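Below is a sketch of the Kahn's algorithm variant in Python. It assumes courses are numbered 0 to `num_courses - 1` and that each prerequisite is a `(course, prereq)` pair, which is a common but not universal input format; the name `can_finish` is illustrative.

```python
from collections import deque


def can_finish(num_courses, prerequisites):
    """Return True if the prerequisite graph has no cycle."""
    graph = [[] for _ in range(num_courses)]  # prereq -> courses it unlocks
    indegree = [0] * num_courses              # unmet prerequisites per course
    for course, prereq in prerequisites:
        graph[prereq].append(course)
        indegree[course] += 1

    # Start with every course that has no prerequisites.
    queue = deque(c for c in range(num_courses) if indegree[c] == 0)
    taken = 0
    while queue:
        course = queue.popleft()
        taken += 1
        for nxt in graph[course]:
            indegree[nxt] -= 1
            if indegree[nxt] == 0:
                queue.append(nxt)

    # Courses on a cycle never reach indegree 0, so they are never taken.
    return taken == num_courses
```

Recording the order in which courses leave the queue gives a valid schedule, which is the usual follow-up question.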

Where it shows up at FAANG in 2026: Less frequent than the patterns above but very high signal when they appear. Google and Amazon ask graph algorithm questions in roughly 10 to 15 percent of senior loops. Topological sort is the most common variant. Union-find appears at the senior level for connectivity-based problems.

Pattern 10: Linked List Manipulation

What it is: Modify pointers in a linked list to reverse it, find the middle, detect cycles, or merge two lists. The pattern uses the “fast and slow pointer” technique frequently.

The signal: The input is explicitly a linked list. Words to watch for: “linked list,” “reverse,” “merge,” “cycle,” “middle node,” “intersection.”

Canonical example: Detect a cycle in a linked list (Floyd’s algorithm). Use a slow pointer (one step at a time) and a fast pointer (two steps at a time). If they ever meet, there’s a cycle. If fast reaches null, no cycle.
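The fast and slow pointers can be sketched in Python like this. `ListNode` is a hypothetical minimal node class, and `has_cycle` is an illustrative name.

```python
class ListNode:
    def __init__(self, val, next=None):
        self.val = val
        self.next = next


def has_cycle(head):
    """Floyd's cycle detection: True if the list loops back on itself."""
    slow = fast = head
    while fast is not None and fast.next is not None:
        slow = slow.next       # one step
        fast = fast.next.next  # two steps
        if slow is fast:       # identity check: same node, not equal values
            return True
    return False               # fast ran off the end, so there is no cycle
```

Comparing with `is` rather than `==` matters when nodes can hold equal values.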

Where it shows up at FAANG in 2026: Linked list questions appear in roughly 10 to 15 percent of FAANG coding rounds. The pattern is consistent across companies. A candidate who fumbles linked list pointer manipulation in 2026 is signaling rust on fundamentals, which interviewers note negatively.

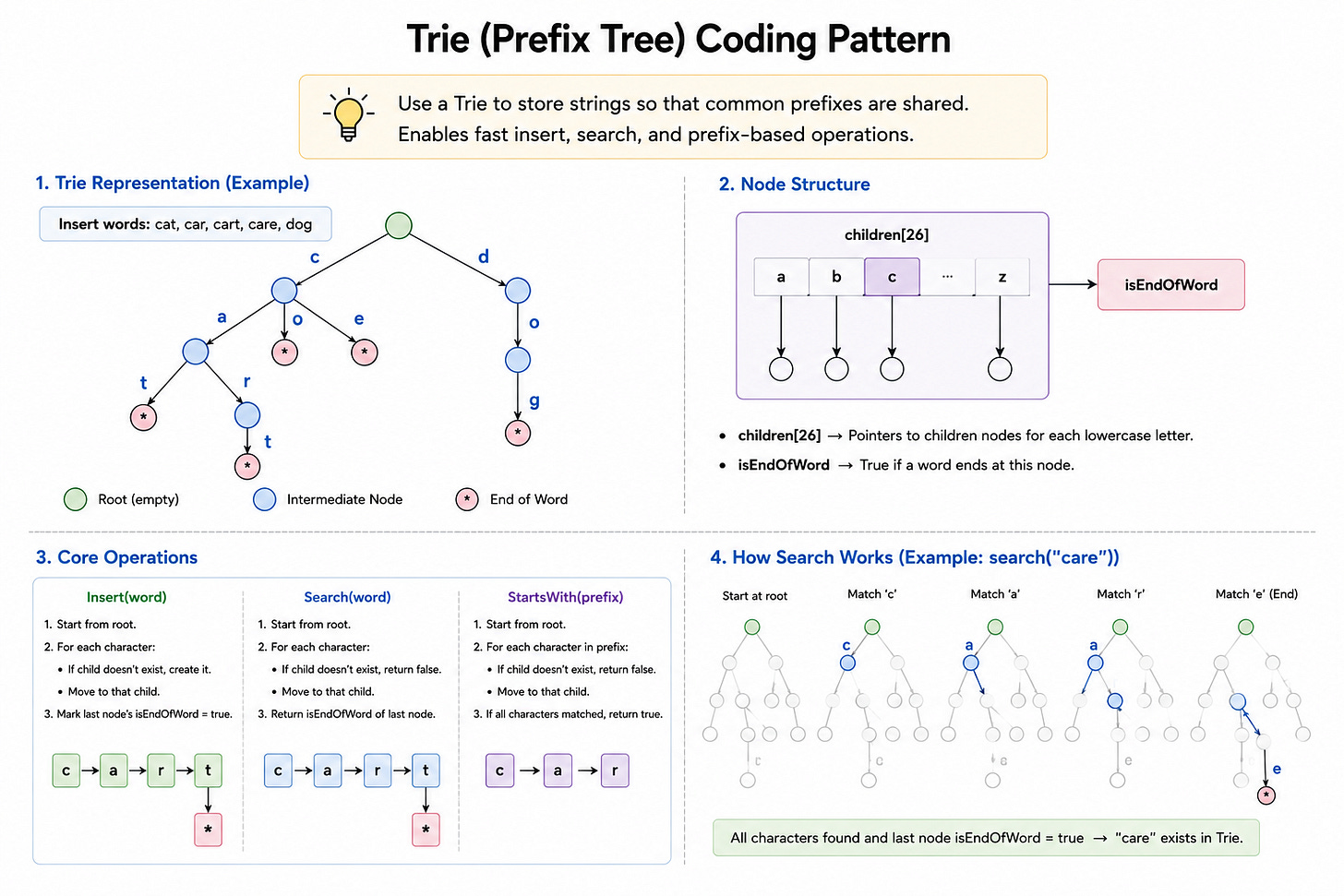

Pattern 11: Trie (Prefix Tree)

What it is: A tree-like data structure for storing strings such that prefixes are shared. Trie operations (insert, search, prefix search) are O(L) where L is the string length, regardless of how many strings are stored.

The signal: Word lookup, prefix matching, autocomplete, dictionary problems. Words to watch for: “prefix,” “autocomplete,” “word search,” “dictionary,” “starts with.”

Canonical example: Implement an autocomplete system. Build a trie from the dictionary. For a given query prefix, traverse to the prefix node and return all words below it.
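A compact Python sketch of that design. The class names `TrieNode` and `Trie`, the `autocomplete` method, and the choice to return matches in alphabetical order are all assumptions for illustration; a production autocomplete would usually rank results instead.

```python
class TrieNode:
    def __init__(self):
        self.children = {}    # character -> TrieNode
        self.is_word = False  # True if a stored word ends at this node


class Trie:
    def __init__(self, words=()):
        self.root = TrieNode()
        for word in words:
            self.insert(word)

    def insert(self, word):
        node = self.root
        for ch in word:
            if ch not in node.children:
                node.children[ch] = TrieNode()
            node = node.children[ch]
        node.is_word = True

    def autocomplete(self, prefix):
        """Return all stored words that start with prefix, alphabetically."""
        node = self.root
        for ch in prefix:  # walk down to the prefix node
            node = node.children.get(ch)
            if node is None:
                return []
        results = []

        def collect(current, suffix):
            if current.is_word:
                results.append(prefix + "".join(suffix))
            for ch in sorted(current.children):
                suffix.append(ch)
                collect(current.children[ch], suffix)
                suffix.pop()

        collect(node, [])
        return results
```

For example, a trie built from "car", "card", "care", "cat", and "dog" returns `["car", "card", "care"]` for the prefix "car".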

Where it shows up at FAANG in 2026: Less common, but a recognized pattern that strong candidates are expected to know. Roughly 5 to 10 percent of FAANG coding rounds at L5+ ask trie problems. Google uses trie problems more than the others, especially for search-related teams.

Pattern 12: Bit Manipulation

What it is: Solve problems by operating directly on the binary representation of integers. Common operations: XOR for finding unique elements, bit shifts for multiplication and division by powers of two, bitmasks for tracking subset membership.

The signal: The problem mentions binary, XOR, single number, missing number from 0 to N, or bitwise operations explicitly. Words to watch for: “binary representation,” “XOR,” “bits,” “set,” “unset.”

Canonical example: Given an array where every element appears twice except one, find the unique element. XOR all elements. Pairs cancel out. Result is the unique value. O(n) time, O(1) space.
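The XOR fold is only a few lines in Python (the name `single_number` is illustrative, and the sketch assumes the input really does contain exactly one unpaired value):

```python
def single_number(nums):
    """Return the element that appears once when all others appear twice."""
    result = 0
    for value in nums:
        result ^= value  # x ^ x == 0 and x ^ 0 == x, so pairs cancel out
    return result
```

It works because XOR is commutative and associative, so the order in which the pairs appear does not matter.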

Where it shows up at FAANG in 2026: The least common of the 12, but distinctive. Roughly 3 to 5 percent of FAANG coding rounds include a bit manipulation question. Google asks them slightly more than the others. A candidate who recognizes the XOR-based solution to a “single number” problem signals strong instincts.

How These Patterns Combine in Real Interviews

The single biggest gap between candidates who study patterns in isolation and candidates who succeed in interviews is the ability to recognize when a problem requires combining patterns.

Examples of pattern combinations seen in 2026 FAANG loops:

Sliding window plus hash map. “Find the longest substring with at most K distinct characters.” Sliding window for the substring, hash map to track character counts in the window.

Binary search plus greedy. “Minimum capacity to ship packages within D days.” Binary search the capacity, greedy check whether each capacity is feasible.

DFS plus memoization (which is DP). “Number of distinct paths in a grid with obstacles.” DFS to enumerate paths, memoization to cache subproblem results.

BFS plus priority queue (which is Dijkstra). “Shortest path in a weighted graph with non-negative edge weights.” BFS-style traversal visits nodes; the priority queue ensures they are visited in order of accumulated weight.
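To make the last combination concrete, here is a hedged Python sketch of Dijkstra built from a BFS-style loop and `heapq`. It assumes the graph is a dict mapping each node to a list of `(neighbor, weight)` pairs with non-negative weights; the function name `dijkstra` and that input format are illustrative choices.

```python
import heapq


def dijkstra(graph, source):
    """Return a dict of shortest distances from source to each reachable node.

    graph: dict mapping node -> list of (neighbor, weight), weights >= 0.
    """
    dist = {source: 0}
    heap = [(0, source)]  # (accumulated weight, node)
    while heap:
        d, node = heapq.heappop(heap)
        if d > dist.get(node, float("inf")):
            continue  # stale entry: a shorter path to node was already found
        for neighbor, weight in graph.get(node, []):
            candidate = d + weight
            if candidate < dist.get(neighbor, float("inf")):
                dist[neighbor] = candidate
                heapq.heappush(heap, (candidate, neighbor))
    return dist
```

Swap the heap for a plain queue and drop the weights, and what remains is ordinary BFS, which is why recognizing the two layers separately makes the algorithm easier to reconstruct under pressure.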

In FAANG interviews in 2026, roughly 30 to 40 percent of problems require recognizing that two patterns combine.

Candidates who memorize patterns in isolation often miss these combinations because they look for “the one pattern” the problem fits, when the answer is “two patterns layered together.”

The fix is practice with annotated solutions.

After solving a problem, identify which patterns were used.

Track combinations explicitly.

Over 50 to 80 problems, you’ll start to recognize that certain pattern pairs co-occur often.

What This Means for Your Prep

If you have a FAANG coding loop scheduled in the next 30 to 90 days, here’s how to use this reference.

Audit your pattern coverage. For each of the 12 patterns above, can you write an implementation from scratch in 5 to 10 minutes without looking anything up? If not, that pattern is a coverage gap. The top 5 patterns matter most. If you can’t reliably implement two pointers, sliding window, hash maps, binary search, and tree traversal cold, those are your highest-priority drills.

Practice pattern recognition specifically. Take 30 to 50 LeetCode problems you have not seen. Without solving them, just read the problem statement and write down which pattern (or combination) applies. Check against the official tags or solutions. The goal is to build the “this is a sliding window problem” instinct in under 60 seconds of reading.

Drill the combinations. After solving any problem, ask: which patterns did I use? Where did they combine? Tracking combinations explicitly is what separates candidates who solve novel problems from candidates who can only solve problems they’ve seen before.

Ignore the 20 percent of problems that don’t fit these patterns. They exist, but they’re rare and often less predictable. Spending 20 percent of your prep time trying to cover the long tail produces less value than spending 100 percent of your prep time on these 12 patterns and their combinations.

Where to Go Deeper

For solution-level depth on each pattern, with 8 to 12 worked examples per pattern and full implementations:

Grokking the Coding Interview: Patterns for Coding Questions (Design Gurus) — the foundational course on this approach. Covers each pattern with progressively harder examples.

LeetCode — for raw practice once you understand the patterns. Filter by tag and practice 5 to 10 problems per tag.

NeetCode 150 — a curated list that maps roughly to these patterns, useful for structured practice.